Basic Three Layer Neural Network in Python

Introduction

As part of understanding neural networks, I was reading Make Your Own Neural Network by Tariq Rashid. The book can be painful to work through, as it is written for a novice, not just in algorithms and data analysis but also in programming. The code here is a verbatim transcription from the text (see the Source section). I published it to better understand how neural networks are designed, which a Jupyter Notebook makes easy, not to present it as my own work. I do hope it helps others develop their talents with data analytics.

GitHub Source: AzureNotebooks/Basic Three Layer Neural Network in Python.ipynb at master · JamesIgoe/AzureNotebooks (github.com)

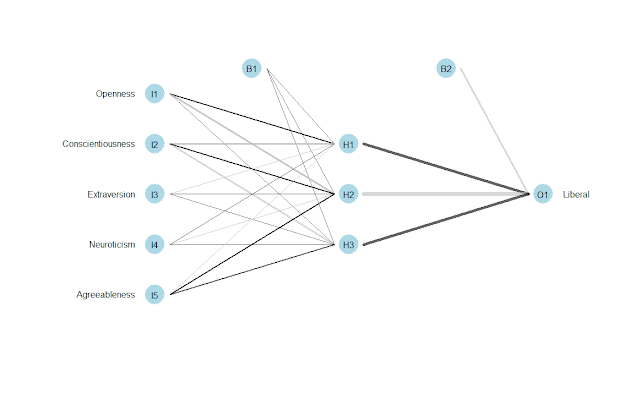

Overview

The code itself develops as follows:

Constructor

- set number of nodes in each input, hidden, output layer

- link weight matrices, wih and who

- weights inside the arrays are w_i_j, where link is from node i to node j in the next layer

- set learning rate

- activation function is the sigmoid function
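To make the constructor steps concrete, here is a minimal sketch of the two weight matrices. The layer sizes are hypothetical and chosen only for illustration; the names `wih` and `who` and the initialisation pattern follow the class shown later in this post.

```python
import numpy

# hypothetical layer sizes, chosen only for illustration
inodes, hnodes, onodes = 4, 3, 2

# same initialisation pattern as the constructor in this post:
# normal distribution, mean 0.0, standard deviation nodes ** -0.5
wih = numpy.random.normal(0.0, pow(hnodes, -0.5), (hnodes, inodes))
who = numpy.random.normal(0.0, pow(onodes, -0.5), (onodes, hnodes))

# rows index the receiving layer, columns the sending layer,
# so numpy.dot(wih, inputs) maps inodes values to hnodes values
print(wih.shape)  # (3, 4)
print(who.shape)  # (2, 3)
```

Because each matrix has one row per receiving node, a single matrix product carries a whole layer's signals forward.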

Define the Training Function

- convert inputs list to 2d array

- calculate signals into hidden layer

- calculate the signals emerging from hidden layer

- calculate signals into final output layer

- calculate the signals emerging from final output layer

- output layer error is the (target - actual)

- hidden layer error is the output_errors, split by weights, recombined at hidden nodes

- update the weights for the links between the hidden and output layers

- update the weights for the links between the input and hidden layers
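The training steps above can be sketched as one update on a tiny network. The weights, inputs, target, and the helper `forward` are hypothetical and exist only for this illustration; the update expressions mirror the ones in the class later in this post.

```python
import numpy
import scipy.special

# hypothetical fixed weights and data, chosen only for illustration
wih = numpy.array([[0.2, -0.4], [0.5, 0.1]])  # input -> hidden
who = numpy.array([[0.3, -0.2]])              # hidden -> output
lr = 0.5

inputs = numpy.array([0.9, 0.1], ndmin=2).T   # column vector, shape (2, 1)
targets = numpy.array([0.8], ndmin=2).T       # column vector, shape (1, 1)

def forward(wih, who, inputs):
    # hypothetical helper: signals through hidden and output layers
    hidden_outputs = scipy.special.expit(numpy.dot(wih, inputs))
    final_outputs = scipy.special.expit(numpy.dot(who, hidden_outputs))
    return hidden_outputs, final_outputs

hidden_outputs, final_outputs = forward(wih, who, inputs)

# output error is (target - actual); hidden error is that error
# split by the hidden-to-output weights and recombined
output_errors = targets - final_outputs
hidden_errors = numpy.dot(who.T, output_errors)

# update: learning rate * (error * sigmoid slope) . (previous layer outputs)^T
who = who + lr * numpy.dot(output_errors * final_outputs * (1.0 - final_outputs), hidden_outputs.T)
wih = wih + lr * numpy.dot(hidden_errors * hidden_outputs * (1.0 - hidden_outputs), inputs.T)

_, new_outputs = forward(wih, who, inputs)
error_before = abs(float(output_errors[0, 0]))
error_after = abs(float((targets - new_outputs)[0, 0]))
print(error_after < error_before)  # True
```

For this example, one update moves the output slightly closer to the target, which is all a single training step is expected to do.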

Define the Query Function

- convert inputs list to 2d array

- calculate signals into hidden layer

- calculate the signals emerging from hidden layer

- calculate signals into final output layer

- calculate the signals emerging from final output layer
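The query steps amount to a forward pass, sketched below with fixed weights so the shapes are easy to follow. The weight and input values are hypothetical and chosen only for illustration.

```python
import numpy
import scipy.special

# hypothetical fixed weights, chosen only for illustration
wih = numpy.array([[0.9, 0.3, 0.4],
                   [0.2, 0.8, 0.2],
                   [0.1, 0.5, 0.6]])
who = numpy.array([[0.3, 0.7, 0.5],
                   [0.6, 0.5, 0.2],
                   [0.8, 0.1, 0.9]])

# ndmin=2 makes a (1, 3) row; .T turns it into a (3, 1) column
inputs = numpy.array([0.9, 0.1, 0.8], ndmin=2).T

# signals into, then out of, the hidden layer
hidden_inputs = numpy.dot(wih, inputs)
hidden_outputs = scipy.special.expit(hidden_inputs)

# signals into, then out of, the final output layer
final_inputs = numpy.dot(who, hidden_outputs)
final_outputs = scipy.special.expit(final_inputs)

print(inputs.shape, final_outputs.shape)  # (3, 1) (3, 1)
```

The result is a column with one value per output node, each between 0 and 1 because of the sigmoid.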

Load Libraries

# for scientific computing with Python
import numpy
# for the sigmoid function expit()
import scipy.special
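As a quick check of what `scipy.special.expit` computes, the sketch below compares it with the sigmoid formula 1 / (1 + e^-x) written out by hand. The sample inputs are arbitrary.

```python
import numpy
import scipy.special

# arbitrary sample inputs, chosen only for illustration
x = numpy.array([-2.0, 0.0, 2.0])

# expit(x) is the logistic sigmoid, 1 / (1 + exp(-x))
sigmoid_scipy = scipy.special.expit(x)
sigmoid_manual = 1.0 / (1.0 + numpy.exp(-x))

print(numpy.allclose(sigmoid_scipy, sigmoid_manual))  # True
print(sigmoid_scipy[1])  # 0.5
```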

Develop Neural Network Class

#neural network class definition
class neuralNetwork:

    #initialise the neural network
    def __init__(self, inputnodes, hiddennodes, outputnodes, learningrate):
        #set number of nodes in each input, hidden, output layer
        self.inodes = inputnodes
        self.hnodes = hiddennodes
        self.onodes = outputnodes

        #link weight matrices, wih and who
        #weights inside the arrays are w_i_j, where link is from node i to node j in the next layer
        #w11 w21
        #w12 w22 etc
        self.wih = numpy.random.normal(0.0, pow(self.hnodes, -0.5), (self.hnodes, self.inodes))
        self.who = numpy.random.normal(0.0, pow(self.onodes, -0.5), (self.onodes, self.hnodes))

        #learning rate
        self.lr = learningrate

        #activation function is the sigmoid function
        self.activation_function = lambda x: scipy.special.expit(x)

        pass

    #train the neural network
    def train(self, inputs_list, targets_list):
        #convert inputs list to 2d array
        inputs = numpy.array(inputs_list, ndmin=2).T
        targets = numpy.array(targets_list, ndmin=2).T

        #calculate signals into hidden layer
        hidden_inputs = numpy.dot(self.wih, inputs)
        #calculate the signals emerging from hidden layer
        hidden_outputs = self.activation_function(hidden_inputs)

        #calculate signals into final output layer
        final_inputs = numpy.dot(self.who, hidden_outputs)
        #calculate the signals emerging from final output layer
        final_outputs = self.activation_function(final_inputs)

        #output layer error is the (target - actual)
        output_errors = targets - final_outputs
        #hidden layer error is the output_errors, split by weights, recombined at hidden nodes
        hidden_errors = numpy.dot(self.who.T, output_errors)

        #update the weights for the links between the hidden and output layers
        self.who += self.lr * numpy.dot((output_errors * final_outputs * (1.0 - final_outputs)), numpy.transpose(hidden_outputs))

        #update the weights for the links between the input and hidden layers
        self.wih += self.lr * numpy.dot((hidden_errors * hidden_outputs * (1.0 - hidden_outputs)), numpy.transpose(inputs))

        pass

    #query the neural network
    def query(self, inputs_list):
        #convert inputs list to 2d array
        inputs = numpy.array(inputs_list, ndmin=2).T

        #calculate signals into hidden layer
        hidden_inputs = numpy.dot(self.wih, inputs)
        #calculate the signals emerging from hidden layer
        hidden_outputs = self.activation_function(hidden_inputs)

        #calculate signals into final output layer
        final_inputs = numpy.dot(self.who, hidden_outputs)
        #calculate the signals emerging from final output layer
        final_outputs = self.activation_function(final_inputs)

        return final_outputs

Run and Output the Result

nn = neuralNetwork(3, 3, 3, .5)
nn.train([1.0, 0.5, -1.5], [1.0, 0.5, -1.5])
nn.query([1.0, 0.5, -1.5])

array([[ 0.4353349 ],
       [ 0.64146723],
       [ 0.3650745 ]])
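One thing worth noting about this run: the weights start out random, so the printed values will differ from run to run, and a sigmoid output always lies between 0 and 1. A target of -1.5 can therefore never be matched, and 1.0 is only approached. The sketch below illustrates that range; the `extreme_signals` values are hypothetical and chosen only to push the sigmoid toward its limits.

```python
import numpy
import scipy.special

# targets used in the run above
targets = numpy.array([1.0, 0.5, -1.5])

# hypothetical extreme input signals, chosen only for illustration
extreme_signals = numpy.array([-30.0, 0.0, 30.0])
outputs = scipy.special.expit(extreme_signals)

# even for extreme signals, sigmoid outputs stay within [0, 1]
print(outputs.min() >= 0.0 and outputs.max() <= 1.0)  # True

# which targets lie strictly inside the sigmoid's (0, 1) range
print(((targets > 0.0) & (targets < 1.0)).tolist())  # [False, True, False]
```

When experimenting further, rescaling targets to sit strictly inside that range gives the network something it can actually reach.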

Source

Tariq's GitHub: Code for the Make Your Own Neural Network Book

Book: Make Your Own Neural Network by Tariq Rashid: Make Your Own Neural Network (GoodReads)
